Federal authorities say a “critical safety gap” in Tesla’s Autopilot system contributed to at least 467 collisions. Those collisions killed 14 people, and “many others” resulted in serious injuries. The findings come from a nearly three-year National Highway Traffic Safety Administration (NHTSA) investigation that analyzed 956 crashes in which Tesla’s Autopilot system was suspected to have been in use.

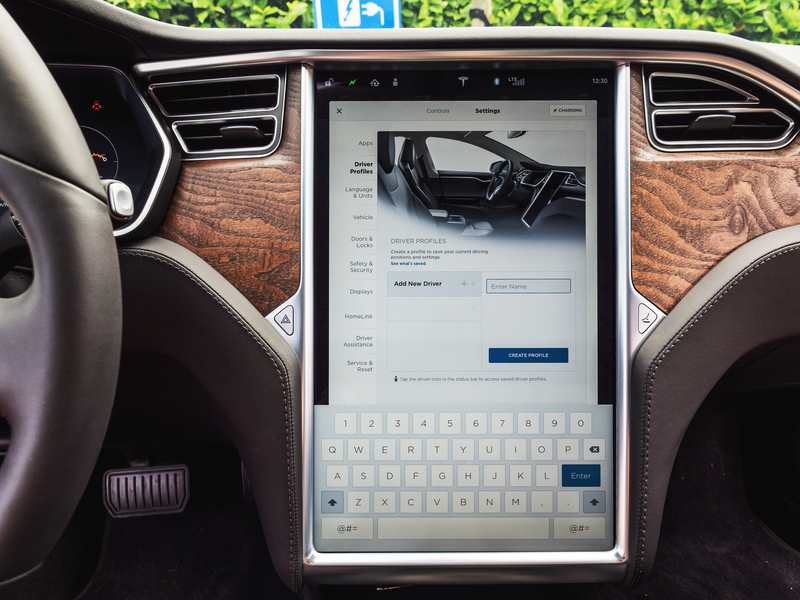

Tesla’s Autopilot design has “led to foreseeable misuse and avoidable crashes,” the NHTSA report said. The system did not “sufficiently ensure driver attention and appropriate use.” NHTSA’s filing pointed to a “weak driver engagement system” and to Autopilot staying switched on even when a driver isn’t paying adequate attention to the road or the driving task. The driver engagement system includes various prompts, such as “nags” or chimes that tell drivers to pay attention and keep their hands on the wheel, as well as in-cabin cameras that can detect when a driver is not looking at the road.

According to NHTSA Office of Defects Investigation data, 13 fatal collisions evaluated in the probe resulted in the deaths of 14 people. The agency is also opening a new probe into the effectiveness of a software update Tesla previously issued as part of a recall in December 2023. That update was meant to fix Autopilot defects that NHTSA identified in this same investigation. The voluntary recall, delivered as an over-the-air software update, covered 2 million Tesla vehicles in the U.S. and was supposed to specifically improve driver monitoring in Teslas equipped with Autopilot.

The report, released Friday, April 26, 2024, said the software update was probably inadequate, since crashes linked to Autopilot continue to be reported. One recent example involved a Tesla driver in Snohomish County, Washington, who struck and killed a motorcyclist on April 19, according to records obtained by CNBC and NBC News. The driver told police he was using Autopilot at the time of the collision. The NHTSA findings are the latest in a series of regulator and watchdog reports questioning the safety of Tesla’s Autopilot technology, which the company has promoted as a key differentiator from other carmakers.

Tesla’s website says Autopilot is designed to reduce driver “workload” through advanced cruise control and automatic steering technology. Tesla has not issued a response to Friday’s NHTSA report and did not respond to a request for comment sent to Tesla’s press inbox, investor relations team and to the company’s vice president of vehicle engineering, Lars Moravy.

Following the release of the NHTSA report, Sens. Edward J. Markey, D-Mass., and Richard Blumenthal, D-Conn., issued a statement calling on federal regulators to require Tesla to restrict its Autopilot feature “to the roads it was designed for.” “We urge the agency to take all necessary actions to prevent these vehicles from endangering lives,” the senators said. Among a host of other warnings, Tesla’s online owner’s manual tells drivers not to operate the Autosteer function of Autopilot “in areas where bicyclists or pedestrians may be present.”

Earlier this month, Tesla settled a lawsuit from the family of Walter Huang, an Apple engineer and father of two, who died when his Tesla Model X, with Autopilot features switched on, hit a highway barrier. Tesla has sought to keep the terms of the settlement sealed from public view.

In the face of these events, Tesla and CEO Elon Musk signaled this week that they are betting the company’s future on autonomous driving. “If somebody doesn’t believe Tesla’s going to solve autonomy, I think they should not be an investor in the company,” Musk said on Tesla’s earnings call Tuesday. He added, “We will, and we are.” Musk has also made safety claims about Tesla’s driver assistance systems while refusing third-party reviews of the company’s data.

Philip Koopman, an automotive safety researcher and associate professor of computer engineering at Carnegie Mellon University, said he views Tesla’s marketing and claims as “autonowashing.” In response to NHTSA’s report, he also said he hopes Tesla will take the agency’s concerns seriously moving forward. “People are dying due to misplaced confidence in Tesla Autopilot capabilities. Even simple steps could improve safety,” Koopman said. “Tesla could automatically restrict Autopilot use to intended roads based on map data already in the vehicle. Tesla could improve monitoring so drivers can’t routinely become absorbed in their cellphones while Autopilot is in use.”

We represent people who are injured because of the careless and reckless acts of others. At the end of the day, your case can only be settled one time, and you need to know all of the facts beforehand. The reason that insurance companies have paid our clients in excess of $130,000,000.00 is that we get the facts and are not intimidated at the prospect of going to trial when insurance companies fail to offer full compensation. We help with serious injuries that require serious representation. We are the Law Offices of Guenard & Bozarth, LLP. Our attorneys have more than 60 years of experience and represent only injured people. Call GB Legal 24/7/365 at 888-809-1075 or visit www.gblegal.com. We would be honored to represent you!